It’s time for a review of the second year of operation of our Redflow ZCell battery and Victron Energy inverter/charger system. To understand what follows it will help to read the earlier posts in this series:

- Go With The Flow (what all the pieces are, what they do, some teething problems)

- Hack Week 21: Keeping the Battery Full (an experiment in working around the limitations of a single ZCell)

- TANSTAAFL (review/analysis of the first year of operation)

In case ~12,000 words of background reading seem daunting, I’ll try to summarise the most important details here:

- We have a 5.94kW solar array hooked up to a Victron MPPT RS solar charge controller, two Victron 5kW MultiPlus-II inverter/chargers, a Victron Cerbo GX console, and a single 10kWh Redflow ZCell battery. It works really well. We’re using most of our generated power locally, and it’s enabled us to blissfully coast through several grid power outages and various other minor glitches. The Victron gear and the ZCell were installed by Lifestyle Electrical Services.

- Redflow batteries are excellent because you can 100% cycle them every day, and they aren’t a giant lump of lithium strapped to your house that’s impossible to put out if it bursts into flames. The catch is that they need to undergo periodic maintenance where they are completely discharged for a few hours at least every three days. If you have more than one, that’s fine because the maintenance cycles interleave (it’s all automatic). If you only have one, you can’t survive grid outages if you’re in a maintenance period, and you can’t ordinarily use the Cerbo’s Minimum State of Charge (MinSoC) setting to perpetually keep a small charge in the battery in case of emergencies. As we still only have one battery, I’ve spent a fair bit of time experimenting to mitigate this as much as I can.

- The system itself requires a certain amount of power to run. Think of the pumps and fans in the battery, and the power used directly by the inverters and the console. On top of that a certain amount of power is simply lost to AC/DC conversion and charge/discharge inefficiencies. That’s power that comes into your house from the grid and from the sun that your loads, i.e. the things you care about running, don’t get to use. This is true of all solar PV and battery storage systems to a greater or lesser degree, but it’s not something that people always think about.

With the background out of the way we can get on to the fun stuff, including a roof replacement, an unexpected fault after a power outage followed by some mains switchboard rewiring, a small electrolyte leak, further hackery to keep a bit of charge in the battery most of the time, and finally some numbers.

The big job we did this year was replacing our concrete tile roof with Colorbond steel. When we bought the house – which is in a rural area and thus a bushfire risk – we thought: “concrete brick exterior, concrete tile roof – sweet, that’s not flammable”. Unfortunately it turns out that while a tile roof works just fine to keep water out, it won’t keep embers out. There’s a gadzillion little gaps where the tiles overlap each other, and in an ember attack, embers will get up in there and ignite the fantastic amount of dust and other stuff that’s accumulated inside the ceiling over several decades, and then your house will burn down. This could be avoided by installing roof blanket insulation under the tiles, but in order to do that you have to first remove all the tiles and put them down somewhere without breaking them, then later put them all back on again. It’s a lot of work. Alternatively, you can just rip them all off and replace the whole lot with nice new steel, with roof blanket insulation underneath.

Of course, you need good weather to replace a roof, and you need to take your solar panels down while it’s happening. This meant we had twenty-two solar panels stacked on our back porch for three weeks of prime PV time from February 17 – March 9, 2023, which I suspect lost us a good 500kWh of generation. Also, the roof job meant we didn’t have the budget to get a second ZCell this year – for the cost of the roof replacement, we could have had three new ZCells installed – but as my wife rightly pointed out, all the battery storage in the world won’t do you any good if your house burns down.

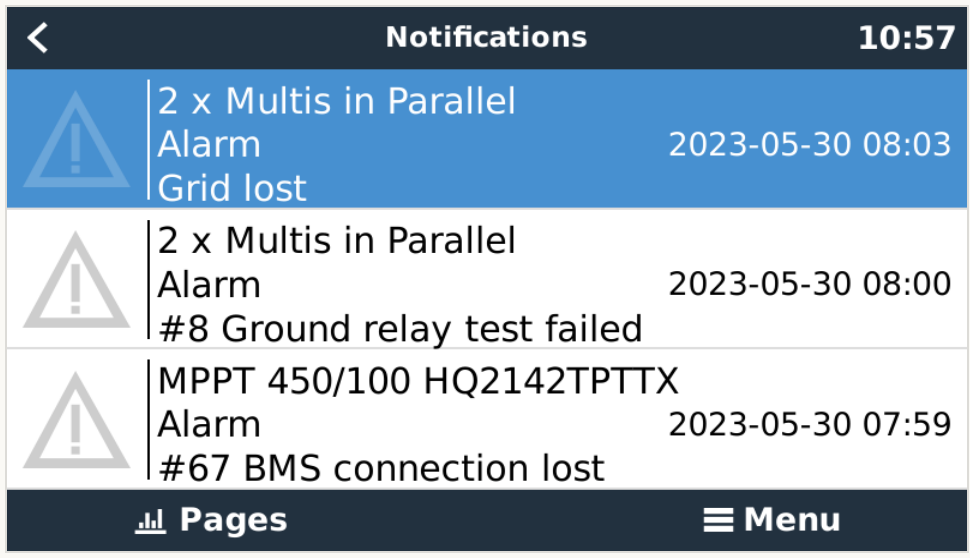

We had at least five grid power outages during the year. A few were brief, the grid being down for only a couple of minutes, but there were two longer ones in September (one for 30 minutes, one for about an hour and a half). We got through the long ones just fine with either the sun high in the sky, or charge in the battery, or both. One of the earlier short outages, though, uncovered a problem. On the morning of May 30, my wife woke up to discover there was no power, and thus no running water. Not a good thing to wake up to. This happened while I was away, because of course something like this would happen while I was away. It turns out there had been a grid outage at about 02:10, then the grid power had come back, but our system had not. The Multis ended up in some sort of fault state and were refusing to power our loads. On the console was an alarm message: “#8 – Ground relay test failed”.

Note the times in the console messages are about 08:00. I confirmed via the logs from the VRM portal that the grid really did go out some time between 02:10 and 02:15, but after that there was nothing in the logs until 07:59, which is when my wife used the manual changeover switch to shift all our loads back to direct grid power, bypassing the Victron kit. That brought our internet connection back, along with the running water. I contacted Murray Roberts from Lifestyle Electrical and Simon Hackett for assistance. Murray logged in remotely and reset the Multis, my wife flicked the changeover switch back, and everything was fine. But the question remained: what had gone wrong?

The ground relay in the Multis is there to connect neutral to ground when the grid fails. Neutral and ground are already physically connected on the grid (AC input) side of the Multis in the main switchboard, but when the grid power goes out, the Multis disconnect their inputs, which means the loads on the AC output side no longer have that fixed connection from neutral to ground. The ground relay activates in this case to provide that connection, which is necessary for correct operation of the safety switches on the power circuits in the house.

The ground relay is tested automatically by the Multis. Looking up Error 8 – Ground relay test failed on Victron’s web site indicated that either the ground relay really was faulty, or possibly there was a wiring fault or an issue with one of the loads in our house. So I did some testing. First, with the battery at 50% State of Charge (SoC), I did the following:

1. Disconnected all loads (i.e. flipped the breaker on the output side of the Multis)

2. Killed the mains (i.e. flipped the breaker on the input side of the Multis)

3. Verified the system switched to inverting mode (i.e. running off the battery)

4. Restored mains power

5. Verified there was no error

This demonstrated that the ground relay and the Multis in general were fine. Had there been a problem at that level we would have seen an error when I restored mains power. I then reconnected the loads and repeated steps 2-5 above. Again, there was no error which indicated the problem wasn’t due to a wiring defect or short in any of the power or lighting circuits. I also re-tested with the heater on and the water pump running just in case there may have been an issue specifically with either of those devices. Again, there was no error.

The only difference between my test above and the power outage in the middle of the night was that in the middle of the night there was no charge in the battery (it was right after a maintenance cycle) and no power from the sun. So in the evening I turned off the DC isolators for the PV and deactivated my overnight scheduled grid charge so there’d be no backup power of any form in the morning. Then I repeated the test:

1. Disconnected all loads

2. Killed the mains

3. Checked the console, which showed the system as “off”, as opposed to “inverting”, as there was no battery power or solar generation

4. Restored mains power

5. Shortly thereafter, I got the ground relay test failed error

The underlying detailed error message was “PE2 Closed”, which meant that it was seeing the relay as closed when it’s meant to be open. Our best guess is that we’d somehow hit an edge case in the Multis’ ground relay test, where they maybe tried to switch to inverting mode and activated the ground relay, then just died in that state because there was no backup power, and got confused when mains power returned. I got things running again by simply power cycling the Multis.

So it kinda wasn’t a big deal, except that if the grid went out briefly with no backup power, our loads would remain without power until one of us manually reset the system. This was arguably worse than not having the system at all, especially if it happened in the middle of the night, or when we were away from home. The fact that we didn’t hit this problem in the first year of operation is a testament to how unlikely this event is, but the fact that it could happen at all remained a problem.

One fix would have been to get a second battery, because then we’d be able to keep at least a tiny bit of backup power at all times regardless of maintenance cycles, but we’re not there yet. Happily, Simon found another fix, which was to physically connect the neutral together between the AC input and AC output sides of the Multis, then reconfigure them to use the grid code “AS4777.2:2015 AC Neutral Path externally joined”. That physical link means the load (output) side picks up the ground connection from the grid (input) side in the switchboard, and changing the grid code setting in the Multis disables the ground relay and thus the test, which is no longer necessary.

Murray needed to come out anyway to replace the carbon sock in the ZCell (a small item of annual maintenance) and was able to do that little bit of rewiring and configuration at the same time. I repeated my tests both with and without backup power and everything worked perfectly, i.e. the system came back immediately by itself after a grid outage with no backup power, and of course switched over to inverting just fine when there was backup power available.

This leads to the next little bit of fun. The carbon sock is a thing that sits inside the zinc electrolyte tank and helps to keep the electrolyte pH in the correct operating range. Unfortunately I didn’t manage to get a photo of one, but they look a bit like door snakes. Replacing the carbon sock means opening the case, popping one side of the Gas Handling Unit (GHU) off the tank, pulling out the old sock and putting in a new one. Here’s a picture of the ZCell with the back of the case off, indicating where the carbon sock goes:

When Murray popped the GHU off, he noticed that one of the larger pipes on one side had perished slightly. Thankfully he happened to have a spare GHU with him so was able to replace the assembly immediately. All was well until later that afternoon, when the battery indicated hardware failure due to “Leak 1 Trip” and shut itself down out of an abundance of caution. Upon further investigation the next day, Murray and I discovered there was a tiny split in one of the little hoses going into the GHU which was letting the electrolyte drip out.

This small electrolyte leak was caught lower down in the battery, where the leak sensor is. Murray sucked the leaked electrolyte out of there, re-terminated that little hose and we were back in business. I was happy to learn that Redflow had obviously thought about the possibility of this type of failure and handled it. As I said to Murray at the time, we’d rather have a battery that leaks then turns itself off than a battery that catches fire!

Aside from those two interesting events, the rest of the year of operation was largely quite boring, which is exactly what one wants from a power system. As before I kept a small overnight scheduled charge and a larger late afternoon scheduled charge active on weekdays to ensure there was some power in the battery to use at peak (i.e. expensive) grid times. In spring and summer the afternoon charge is largely superfluous because the battery has usually been well filled up from the solar by then anyway, but there’s no harm in leaving it turned on. The one hack I did do during the year was to figure out a way to keep a small (I went with 15%) MinSoC in the battery at all times except for maintenance cycle evenings, and the morning after. This is more than enough to smooth out minor grid outages of a few minutes, and given our general load levels should be enough to run the house for more than an hour overnight if necessary, provided the hot water system and heating don’t decide to come on at the same time.

My earlier experiment along these lines involved a script that ran on the Cerbo twice a day to adjust scheduled charge settings in order to keep the battery at 100% SoC at all times except for peak electricity hours and maintenance cycle evenings. As mentioned in TANSTAAFL I ran that for all of July, August and most of September 2022. It worked fine, but ultimately I decided it was largely a waste of energy and money, especially when run during the winter months when there’s not much sun and you end up doing a lot of grid charging. This is a horribly inefficient way of getting power into the battery (AC to DC) versus charging the battery direct from solar PV. We did still use those scripts in the second year, but rather more judiciously, i.e. we kept an eye on the BOM forecasts as we always do, then occasionally activated the 100% charge when we knew severe weather and/or thunderstorms were on the way, those being the things most likely to cause extended grid outages. I also manually triggered maintenance on the battery earlier than strictly necessary several times when we expected severe weather in the coming days, to avoid having a maintenance cycle (and thus empty battery) coincide with potential outages. On most of those occasions this effort proved to be unnecessary. Bearing all that in mind, my general advice to anyone else with a single ZCell system (aside from maybe adding scheduled charges to time-shift expensive peak electricity) is to just leave it alone and let it do its thing. You’ll use most of your locally generated electricity onsite, you’ll save some money on your power bills, and you’ll avoid some, but not all, grid outages. This is a pretty good position to be in.

That said, I couldn’t resist messing around some more, hence my MinSoC experiment. Simon’s installation guide points out that “for correct system operation, the Settings->ESS menu ‘Min SoC’ value must be set to 0% in single-ZCell systems”. The issue here is that if MinSoC is greater than 0%, the Victron gear will try to charge the battery while the battery is simultaneously trying to empty itself during maintenance, which of course just isn’t going to work. My solution to this is the following script, which I run from a cron job on the Cerbo twice a day, once at midnight UTC and again at 06:00 UTC with the --check-maintenance flag set:

Midnight UTC corresponds to the end of our morning peak electricity time, and 06:00 UTC corresponds to the start of our afternoon peak. What this means is that after the morning peak finishes, the MinSoC setting will cause the system to automatically charge the battery to the value specified if it’s not up there already. Given it’s after the morning peak (10:00 AEST / 11:00 AEDT) this charge will likely come from solar PV, not the grid. When the script runs again just before the afternoon peak (16:00 AEST / 17:00 AEDT), MinSoC is set to either the value specified (effectively a no-op), or zero if it’s a maintenance day. This allows the battery to be discharged correctly in the evening on maintenance days, while keeping some charge every other day in case of emergencies. Unlike the script that tries for 100% SoC, this arrangement results in far less grid charging, while still giving protection from minor outages most of the time.
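As a rough illustration of the decision described above (and not the script itself), the twice-daily logic might be sketched like this. The function name, the three-day limit, the 15% target and the 12-hour look-ahead are all assumptions made for the example. Reading the time since last maintenance from the ZCell and writing the MinSoC value to the Cerbo are deliberately left out.

```python
from datetime import timedelta


def choose_min_soc(since_last_maintenance: timedelta,
                   maintenance_limit: timedelta = timedelta(days=3),
                   target_percent: int = 15,
                   check_maintenance: bool = False) -> int:
    """Return the MinSoC percentage to apply for this run (hypothetical helper).

    Without check_maintenance (the morning run) the target is always returned.
    With check_maintenance (the afternoon run) the result is 0 when a
    maintenance cycle is expected this evening, so the battery can empty itself.
    """
    if not check_maintenance:
        return target_percent
    # Assumption: maintenance counts as "due tonight" if the configured limit
    # will be reached within the next 12 hours.
    due_tonight = since_last_maintenance + timedelta(hours=12) >= maintenance_limit
    return 0 if due_tonight else target_percent


# Morning run: always ask for the target.
print(choose_min_soc(timedelta(days=2, hours=20)))
# Afternoon run on a maintenance day: drop to zero.
print(choose_min_soc(timedelta(days=2, hours=20), check_maintenance=True))
```

Because this only looks at elapsed time, it shares the blind spot of any purely time-based check: it cannot know about a maintenance cycle that starts for any other reason.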

In case Simon is reading this now and is thinking “FFS, I wrote ‘MinSoC must be set to 0% in single-ZCell systems’ for a reason!” I should also add a note of caution. The script above detects ZCell maintenance cycles based solely on the configured maintenance time limit and the duration since last maintenance. It does not – and cannot – take into account occasions when the user manually forces maintenance, or situations in which a ZCell for whatever reason hypothetically decides to go into maintenance of its own accord. The latter shouldn’t generally happen, but it can. The point is, if you’re running this MinSoC script from a cron job, you really do still want to keep an eye on what the battery is doing each day, in case you need to turn that setting off and disable the cron job. If you’re not up for that I will reiterate my general advice from earlier: just leave the system alone – let it do its thing and you’ll (almost always) be perfectly fine. Or, get a second ZCell and you can ignore the last several paragraphs entirely.

Now, finally, let’s look at some numbers. The year periods here are a little sloppy for irritating historical reasons. 2018-2019, 2019-2020 and 2020-2021 are all August-based due to Aurora Energy’s previous quarterly billing cycle. The 2021-2022 year starts in late September partly because I had to wait until our new electricity meter was installed in September 2021, and partly because it let me include some nice screenshots when I started writing TANSTAAFL on September 25, 2022. I’ve chosen to make this year (2022-2023) mostly sane, in that it runs from October 1, 2022 through September 30, 2023 inclusive. This is only six days offset from the previous year, but notably makes it much easier to accurately correlate data from the VRM portal with our bills from Aurora. Overall we have five consecutive non-overlapping 12 month periods that are pretty close together. It’s not perfect, but I think it’s good enough to work with for our purposes here.

| Year | Grid In (kWh) | Solar In (kWh) | Total In (kWh) | Loads (kWh) | Export (kWh) |
|---|---|---|---|---|---|
| 2018-2019 | 9,031 | 6,682 | 15,713 | 11,827 | 3,886 |
| 2019-2020 | 9,324 | 6,468 | 15,792 | 12,255 | 3,537 |
| 2020-2021 | 7,582 | 6,347 | 13,929 | 10,358 | 3,571 |
| 2021-2022 | 8,531 | 5,640 | 14,171 | 10,849 | 754 |
| 2022-2023 | 8,936 | 5,744 | 14,680 | 11,534 | 799 |

Overall, 2022-2023 had a similar shape to 2021-2022, including the fact that in both these years we missed three weeks of solar generation in late summer. In 2022 this was due to replacing the MPPT, and in 2023 it was because we replaced the roof. In both cases our PV generation was lower than it should have been by an estimated 500-600kWh. Hopefully nothing like this happens again in future years.

All of our numbers in 2022-2023 were a bit higher than in 2021-2022. We pulled 4.75% more power from the grid, generated 1.84% more solar, the total power going into the system (grid + solar) was 3.59% higher, our loads used 6.31% more power, and we exported 5.97% more power than the previous year.
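For anyone who wants to check those percentages, here is a minimal sketch that recomputes them from the annual totals in the table above. The dictionary keys and the helper name are just labels chosen for this example.

```python
# Annual totals in kWh, copied from the table above.
prev = {"grid_in": 8531, "solar_in": 5640, "total_in": 14171, "loads": 10849, "export": 754}
curr = {"grid_in": 8936, "solar_in": 5744, "total_in": 14680, "loads": 11534, "export": 799}


def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


changes = {name: round(pct_change(prev[name], curr[name]), 2) for name in prev}
for name, change in changes.items():
    print(f"{name}: {change:+.2f}%")
```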

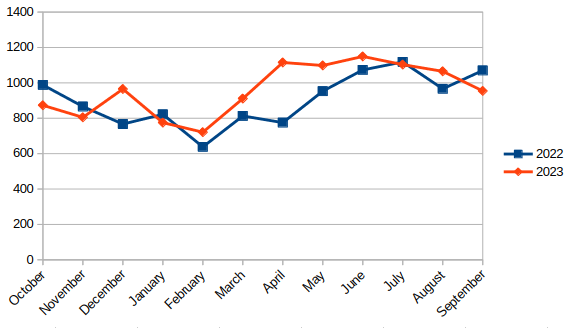

I honestly don’t know why our loads used more power this year. Here’s a table showing our consumption for both years, and the differences each month (note that September 2022 is only approximate because of how the years don’t quite line up):

| Month | 2021-2022 (kWh) | 2022-2023 (kWh) | Diff (kWh) |
|---|---|---|---|
| October | 988 | 873 | -115 |
| November | 866 | 805 | -61 |
| December | 767 | 965 | 198 |
| January | 822 | 775 | -47 |
| February | 638 | 721 | 83 |
| March | 813 | 911 | 98 |
| April | 775 | 1,115 | 340 |
| May | 953 | 1,098 | 145 |
| June | 1,073 | 1,149 | 76 |
| July | 1,118 | 1,103 | -15 |
| August | 966 | 1,065 | 99 |
| September | 1,070 | 954 | -116 |

Here’s a graph:

Did we use more cooling this December? Did we use more heating this April and May? I dug the nearest weather station’s monthly mean minimum and maximum temperatures out of the BOM Climate Data Online tool and found that there’s maybe a degree or so variance one way or the other each month year to year, so I don’t know what I can infer from that. All I can say is that something happened in December and April, but I don’t know what.

Another interesting thing is that what I referred to as “the energy cost of the system” in TANSTAAFL has gone down. That’s the kWh figure below in the “what?” column, which is grid in + solar in – loads – export, i.e. the energy consumed by the system itself. In 2021-2022, that was 2,568kWh, or about 18% of the total power that went into the system. In 2022-2023 it was down to 2,347kWh, or just under 16%:

| Year | Grid In (kWh) | Solar In (kWh) | Total In (kWh) | Loads (kWh) | Export (kWh) | Total Out (kWh) | what? (kWh) |
|---|---|---|---|---|---|---|---|
| 2021-2022 | 8,531 | 5,640 | 14,171 | 10,849 | 754 | 11,603 | 2,568 |
| 2022-2023 | 8,936 | 5,744 | 14,680 | 11,534 | 799 | 12,333 | 2,347 |

I suspect the cause of this reduction is that we didn’t spend two and a half months doing lots of grid charging of the battery in 2022-2023. If that’s the case, this again points to the advisability of just letting the system do its thing and not messing with it too much unless you really know you need to.
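The “what?” figure is plain arithmetic on the other columns, so it’s easy to reproduce. A small sketch, using the numbers from the table above and a made-up function name:

```python
def system_overhead(grid_in: int, solar_in: int, loads: int, export: int):
    """Return (kWh consumed by the system itself, that amount as % of total input)."""
    total_in = grid_in + solar_in
    overhead = total_in - loads - export
    return overhead, overhead / total_in * 100


# (grid in, solar in, loads, export) in kWh for each year.
years = {
    "2021-2022": (8531, 5640, 10849, 754),
    "2022-2023": (8936, 5744, 11534, 799),
}
for year, figures in years.items():
    kwh, share = system_overhead(*figures)
    print(f"{year}: {kwh:,} kWh ({share:.1f}% of total input)")
```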

The last set of numbers I have involve actual money. Here’s what our electricity bills looked like over the past five years:

| Year | From Grid (kWh) | Total Bill | Cost/kWh |
|---|---|---|---|
| 2018-2019 | 9,031 | $2,278.33 | $0.25 |
| 2019-2020 | 9,324 | $2,384.79 | $0.26 |
| 2020-2021 | 7,582 | $1,921.77 | $0.25 |
| 2021-2022 | 8,531 | $1,731.40 | $0.20 |
| 2022-2023 | 8,936 | $1,989.12 | $0.22 |

Note that cost/kWh as I have it here is simply the total dollar amount of our bills divided by the total power drawn from the grid (I’m deliberately ignoring the additional power we use that comes from the sun in this calculation). The bills themselves say “peak power costs $X, off-peak costs $Y, you get $Z back for power exported and there’s a daily supply charge of $SUCKS_TO_BE_YOU”, but that’s all noise. What ultimately matters in my opinion is what I call the effective cost per kilowatt hour, which is why those things are all smooshed together here. The important point is that with our existing solar array we were previously effectively paying about $0.25 per kWh for grid power. After getting the battery and switching to Peak & Off-Peak billing, that went down to $0.20/kWh – a reduction of 20%. Now we’ve inched back up to $0.22/kWh, but it turns out that’s just because power prices have increased. As far as I can tell Aurora Energy don’t publish historical pricing data, so as a public service, I’ll include what I’ve been able to glean from our prior bills here:

- July 2023 onwards:
  - Daily supply charge: $1.26389
  - Peak: $0.36198/kWh
  - Off-Peak: $0.16855/kWh
  - Feed-In Tariff: $0.10869/kWh

- July 2022 – July 2023:
  - Daily supply charge: $1.09903
  - Peak: $0.33399/kWh
  - Off-Peak: $0.15551/kWh
  - Feed-In Tariff: $0.08883/kWh

- Before July 2022:
  - Daily supply charge: $0.98
  - Peak: $0.29852/kWh
  - Off-Peak: $0.139/kWh
  - Feed-In Tariff: $0.06501/kWh

It’s nice that the feed-in tariff (i.e. what you get credited when you export power) has gone up quite a bit, but unless you’re somehow able to export 2-3x more power than you import, you’ll never get ahead of the ~20% increase in power prices over the last two years.
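To put rough numbers on that, here is a small sketch comparing the oldest and newest tariffs listed above. The break-even ratios ignore the daily supply charge entirely, which is a simplifying assumption for the example, and the variable names are made up.

```python
# Tariffs in dollars from the list above: (before July 2022, July 2023 onwards).
tariffs = {
    "daily_supply": (0.98, 1.26389),
    "peak": (0.29852, 0.36198),
    "off_peak": (0.139, 0.16855),
    "feed_in": (0.06501, 0.10869),
}

# Percentage increase of each tariff between the two periods.
increase = {name: (new - old) / old * 100 for name, (old, new) in tariffs.items()}
for name, pct in increase.items():
    print(f"{name}: +{pct:.1f}%")

# kWh you would have to export to offset one kWh imported at current rates.
breakeven_peak = tariffs["peak"][1] / tariffs["feed_in"][1]
breakeven_off_peak = tariffs["off_peak"][1] / tariffs["feed_in"][1]
print(f"export per peak kWh imported: {breakeven_peak:.2f}")
print(f"export per off-peak kWh imported: {breakeven_off_peak:.2f}")
```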

Having calculated the effective cost/kWh for grid power, I’m now going to do one more thing which I didn’t think to do during last year’s analysis, and that’s calculate the effective cost/kWh of running our loads, bearing in mind that they’re partially powered from the grid, and partially from the sun. I’ve managed to dig up some old Aurora bills from 2016-2017, back before we put the solar panels on. This should make for an interesting comparison.

| Year | From Grid (kWh) | Total Bill | Grid $/kWh | Loads (kWh) | Loads $/kWh |
|---|---|---|---|---|---|
| 2016-2017 | 17,026 | $4,485.45 | $0.26 | 17,026 | $0.26 |
| 2018-2019 | 9,031 | $2,278.33 | $0.25 | 11,827 | $0.19 |
| 2019-2020 | 9,324 | $2,384.79 | $0.26 | 12,255 | $0.19 |
| 2020-2021 | 7,582 | $1,921.77 | $0.25 | 10,358 | $0.19 |
| 2021-2022 | 8,531 | $1,731.40 | $0.20 | 10,849 | $0.16 |
| 2022-2023 | 8,936 | $1,989.12 | $0.22 | 11,534 | $0.17 |

The first thing to note is the horrifying 17 megawatt hours we pulled in 2016-2017. Given the hot water and lounge room heat pump were on a separate tariff, I was able to determine that four of those megawatt hours (i.e. about 24% of our power usage) went on heating that year. Replacing the crusty old conventional electric hot water system with a Sanden heat pump hot water service cut that in half – subsequent years showed the heating/hot water tariff using about 2MWh/year. We obviously also somehow reduced our loads by another ~3MWh/year on top of that, but I can’t find the Aurora bills for 2017-2018 so I’m not sure exactly when that drop happened. My best guess is that I probably got rid of some old, always-on computer equipment.

The second thing to note is how the cost of running the loads drops. In 2016-2017 the grid cost/kWh is the same as the loads cost/kWh, because grid power is all we had. From 2018-2021 though, the load cost/kWh drops to $0.19, a saving of about 26%. It remains there until 2021-2022 when we got the battery and it dropped again to $0.16 (another 15% or so). So the big win was certainly putting the solar panels on and swapping the hot water system, with the battery being a decent improvement on top of that.
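Both cost columns are just the total bill divided by a kWh figure, so they can be recomputed from the table above. A minimal sketch, with a helper name chosen for this example:

```python
# (kWh from grid, total bill in dollars, kWh used by loads), from the table above.
years = {
    "2016-2017": (17026, 4485.45, 17026),
    "2018-2019": (9031, 2278.33, 11827),
    "2019-2020": (9324, 2384.79, 12255),
    "2020-2021": (7582, 1921.77, 10358),
    "2021-2022": (8531, 1731.40, 10849),
    "2022-2023": (8936, 1989.12, 11534),
}


def effective_costs(grid_kwh: float, bill: float, loads_kwh: float):
    """Return (effective $/kWh of grid power, effective $/kWh of running the loads)."""
    return bill / grid_kwh, bill / loads_kwh


costs = {year: effective_costs(*row) for year, row in years.items()}
for year, (grid_cost, loads_cost) in costs.items():
    print(f"{year}: grid ${grid_cost:.2f}/kWh, loads ${loads_cost:.2f}/kWh")
```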

Further wins are going to come from decreasing our power consumption. In previous posts I had mentioned the need to replace panel heaters with heat pumps, and also that some of our aging computer equipment needed upgrading. We did finally get a heat pump installed in the master bedroom this year, and we replaced the old undersized lounge room heat pump with a new correctly sized unit. This happened on June 30 though, so will have had minimal impact on this year’s figures. Likewise an always-on computer that previously pulled ~100W is now better, stronger and faster in all respects, while only pulling ~50W. That will save us ~438kWh of energy per year, but given the upgrade happened in mid August, again we won’t see the full effects until later.
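The saving from the always-on computer is a quick back-of-the-envelope calculation, assuming the machine really does draw a steady ~100W before and ~50W after, around the clock:

```python
HOURS_PER_YEAR = 24 * 365

old_watts, new_watts = 100, 50  # approximate continuous draw before and after
saved_kwh = (old_watts - new_watts) * HOURS_PER_YEAR / 1000
print(saved_kwh)  # 438.0
```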

I’m looking forward to doing another one of these posts in a year’s time. Hopefully I will have nothing at all interesting to report.

You can actually get the state of maintenance directly from the BMS without needing to guess. I did it using Modbus, because I need to use that protocol anyway to manage my EV charger, but there was a time when I grabbed it from the JSON data.

The script I use/wrote is on GitHub: https://github.com/slamble/redflow-victron-ev. You might find it useful in figuring out how to fix that flaw in your logic.

Cheers.

Oh, brilliant! Thanks Stuart 🙂